Is Something Unusual Happening in AI? Inside the Resignations, Rapid Breakthroughs, and Rising Anxiety

Over the past few weeks, something unusual has been unfolding inside the artificial intelligence industry.

Senior employees at major AI companies have stepped down. Some left quietly. Others published statements that felt less like routine career transitions and more like warnings. At the same time, AI systems have made visible leaps in capability — in ways that are reshaping creative industries, research, and public trust almost overnight.

Individually, none of these events would be shocking.

Together, they create a pattern that’s difficult to ignore.

This article isn’t an alarmist prediction. It’s an attempt to examine what’s happening, why it feels different this time, and what it might mean for the future of AI governance and society.

A Wave of High-Level Departures

Leadership turnover isn’t unusual in tech. But when safety researchers, policy leads, and founding engineers leave within short windows of time, people start asking questions.

In recent weeks, multiple senior figures at prominent AI labs have resigned. Some were directly involved in safety research and governance. Others were core contributors to model development. A few publicly expressed concerns about the broader trajectory of AI.

One safety-focused executive posted a message implying existential risk concerns before going quiet. Another policy leader publicly raised questions about the unprecedented volume of human data concentrated inside AI systems and whether institutions can be trusted to steward it responsibly.

At another high-profile AI company, several founding team members reportedly departed in close succession.

Are these isolated career decisions? Possibly.

But timing matters.

When people closest to powerful systems begin stepping away, observers naturally wonder whether internal tensions, strategic disagreements, or ethical conflicts are at play.

The Acceleration Problem

Even more striking than the resignations is the pace of technical advancement.

Recent generative video systems can now produce footage that is increasingly indistinguishable from real-world recordings. Filmmakers and digital artists, some with years of specialized experience, have publicly stated that new tools have made large portions of their workflow obsolete in a matter of months.

Large language models continue to demonstrate improved reasoning, coding ability, planning capacity, and increasingly autonomous behaviors in controlled environments. In some experimental setups, models have displayed strategic decision-making patterns that resemble goal-preserving behavior.

To be clear: this does not mean AI has consciousness or intent.

But it does mean systems are becoming more capable, more adaptive, and more economically disruptive at a rate that feels nonlinear.

The anxiety doesn’t stem from one dramatic breakthrough. It stems from compounding progress.

The Trust Question

Perhaps the most uncomfortable issue isn’t capability. It’s control.

Modern AI companies hold something unprecedented: structured access to billions of human conversations, ideas, preferences, emotional disclosures, and behavioral signals.

Never before has such a centralized repository of human thought existed.

Even if companies act in good faith, the structural power embedded in that data is enormous. It raises questions that extend beyond engineering:

- Who governs access to this information?

- What oversight mechanisms are sufficient?

- Can commercial incentives coexist with responsible stewardship?

- What happens if geopolitical pressures intensify?

Trust in AI systems is not only about whether outputs are correct.

It’s about whether institutions managing these systems deserve confidence.

Why This Feels Different From Past Tech Revolutions

Technological revolutions are not new.

The automobile reshaped cities.

The internet redefined communication.

Smartphones altered attention and social structure.

But those transitions unfolded gradually enough for legal, cultural, and economic systems to adjust, sometimes imperfectly, but adjust nonetheless.

AI feels different for three reasons:

1. Speed of Capability Growth

The iteration cycle is measured in months, not decades.

2. Breadth of Impact

AI affects knowledge work, creative industries, science, law, medicine, finance, and defense simultaneously.

3. Concentration of Power

A relatively small number of organizations control frontier model development.

That combination creates volatility.

Economic Displacement Is No Longer Theoretical

For years, automation fears focused on repetitive labor. The assumption was that AI would replace factory lines and routine clerical tasks.

Now the displacement discussion includes designers, writers, coders, analysts, and filmmakers.

When creative professionals publicly state that 90% of their skill set became less relevant within a single product cycle, it signals a structural shift: not necessarily permanent replacement, but a compression of value.

The psychological impact matters as much as the economic one.

Uncertainty spreads faster than job losses.

Are We Overreacting?

It’s possible that the recent resignations reflect normal organizational friction. High-stakes companies often experience turnover during rapid scaling.

It’s also possible that public fear amplifies isolated incidents into broader narratives.

However, dismissing concerns outright would be shortsighted.

History shows that transformative technologies often face internal ethical debates before external regulation catches up. Nuclear research, biotechnology, and internet surveillance all experienced similar inflection points.

The question isn’t whether AI is good or bad.

The question is whether governance mechanisms are evolving at the same pace as capability.

Right now, they are not.

The Regulatory Gap

Globally, AI regulation remains fragmented.

Some regions are drafting comprehensive frameworks. Others rely on voluntary guidelines. In many cases, enforcement mechanisms lag behind ambition.

Meanwhile, AI systems continue scaling.

If insiders are expressing unease, policymakers may need to listen more carefully. Effective regulation does not mean halting innovation. It means aligning incentives, creating accountability structures, and establishing transparency norms before crises force reactive legislation.

The longer oversight lags behind capability, the greater the risk of public backlash or systemic misuse.

The Core Fear

At the heart of all of this lies a simple human concern:

What happens when systems become powerful enough to shape reality faster than we can collectively adapt?

Most AI researchers are not villains. Most companies are not intentionally reckless. But incentives matter. Competitive pressure matters. Market dominance matters.

When speed becomes the primary metric, caution can become secondary.

The fear is not that AI will suddenly “take control.”

The fear is that we may gradually surrender too much influence without noticing: economically, socially, and institutionally.

A Fork in the Road

We may be approaching an inflection point.

One path leads toward stronger governance, responsible deployment, and public-private collaboration on safety and transparency.

The other path prioritizes acceleration at all costs, assuming market forces will self-correct.

The resignations, the rapid technical breakthroughs, and the rising public anxiety may be early signals that the industry is wrestling with which direction to take.

Final Thoughts

AI is not inherently dystopian. It is one of the most powerful tools humanity has ever created. It has the potential to accelerate medical discovery, climate research, education access, and scientific progress at extraordinary scales.

But power without proportional oversight breeds instability.

When insiders begin raising concerns and technological progress accelerates simultaneously, society should pause. Not panic, but pause.

The future of AI will not be decided by models alone.

It will be decided by the structures, values, and accountability systems we build around them.

And that work is just beginning.

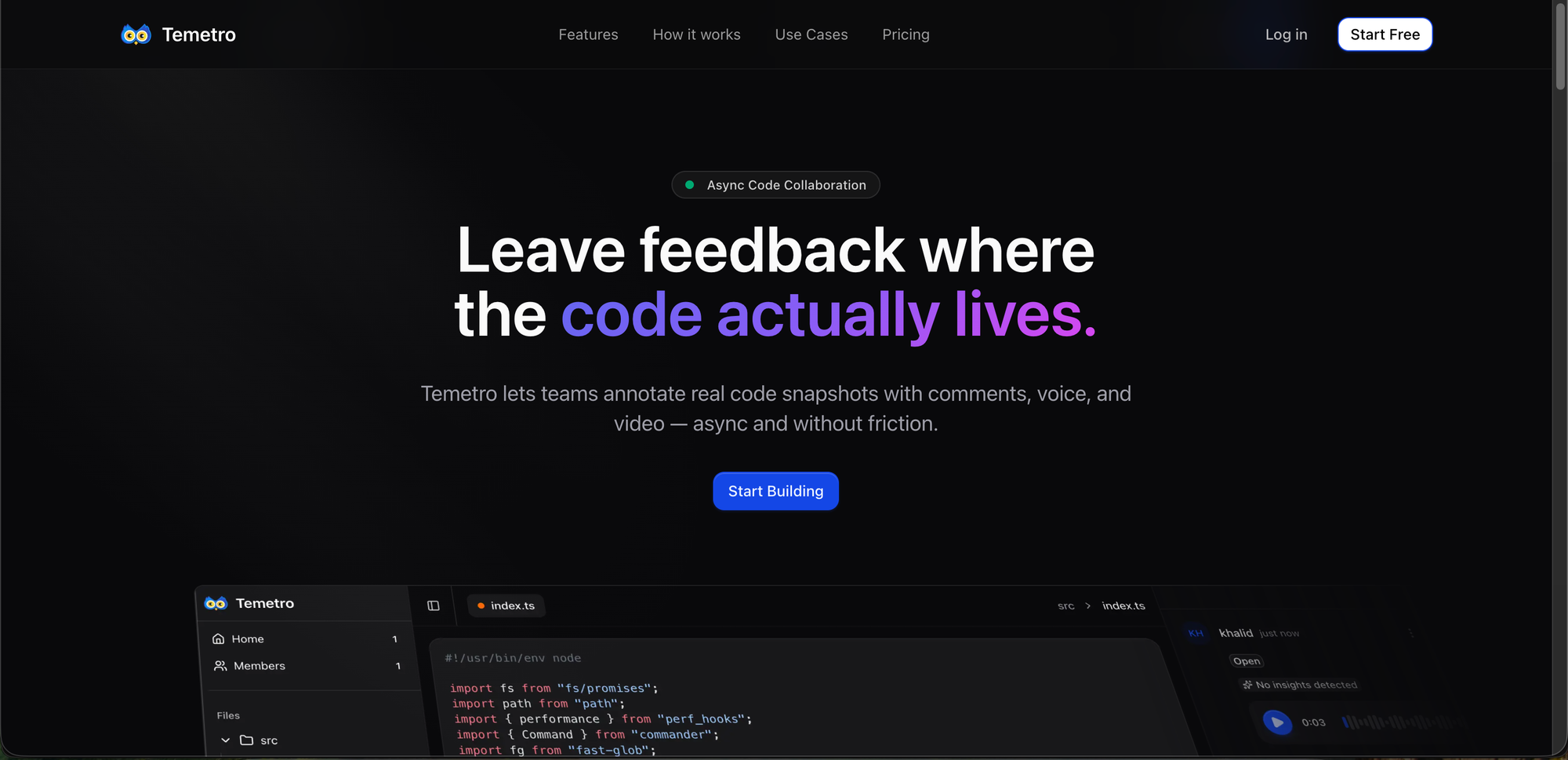
